Update: 12/02/2026

Here is the GitHub repo containing the images, prompts and Svelte code.

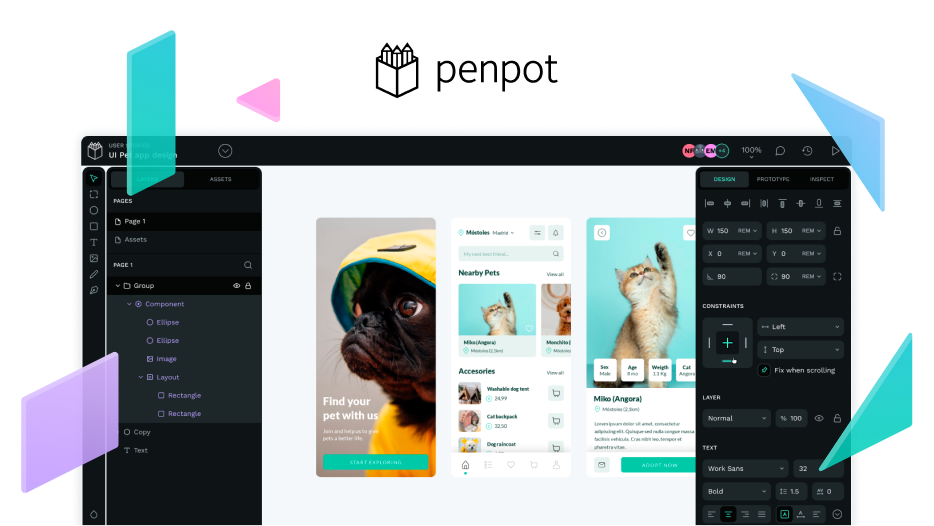

Penpot-MCP Experiments with Opus-4.5 and Qwen3 (locally)

I used Anthropic’s Opus 4.5 (as of 06/01/2026) in VS Code’s integrated Copilot Chat, and Qwen3:32b with Open WebUI running locally.

Hi everyone!

I’ve been experimenting with Claude Opus-4.5, and the results are quite impressive. In this test, the design and code were based on an existing Svelte app. Most of the time, Opus generated high-quality results without needing any follow-up prompts.

Below are a few examples of the results. Some designs, like the listening activity stats, weren’t perfect on the first try: the line graph was disconnected, and there were minor layering issues where elements were stacked in the wrong order. However, these were easily fixed.

Comparison: Reference vs. Result

Home Page

Listening Activity

Manage Accounts

The Challenge with Open-Weight Models

While proprietary models like Opus-4.5 perform well, relying on them can be frustrating if you prefer open-source, self-hosted solutions. I tried to achieve similar results with open-weight models like Qwen3, but I couldn’t reach the desired outcome.

Often, these models would claim a task was “fully accomplished” when no output had actually been generated. Other times, they would “die” mid-process or produce inconsistent results. For instance, while they could create a simple card, they could not reproduce that result reliably.

Enhancing Model Memory and Task Tracking

While digging through GitHub Copilot’s chat logs, I found that it uses a manage_todo_list tool to help the model track its progress. The model first creates a to-do list with every item set to “not started”, then updates each item to “in progress” and then “completed” as it works through them one by one.
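A minimal sketch of that to-do pattern, in Python since Open WebUI tools are Python scripts. This is not Copilot’s actual implementation: the class and method names are hypothetical, and the rule that only one task may be “in progress” at a time is an assumption added for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    NOT_STARTED = "not started"
    IN_PROGRESS = "in progress"
    COMPLETED = "completed"


# Each status may only advance to the next one; "completed" is final.
_NEXT = {
    Status.NOT_STARTED: Status.IN_PROGRESS,
    Status.IN_PROGRESS: Status.COMPLETED,
}


@dataclass
class TodoItem:
    id: int
    title: str
    status: Status = Status.NOT_STARTED


@dataclass
class TodoList:
    items: list[TodoItem] = field(default_factory=list)

    @classmethod
    def create(cls, titles: list[str]) -> "TodoList":
        """Write the whole plan up front, every item 'not started'."""
        return cls([TodoItem(i + 1, title) for i, title in enumerate(titles)])

    def advance(self, item_id: int) -> TodoItem:
        """Move one item to its next status (no skipping, one active item at a time)."""
        if not 1 <= item_id <= len(self.items):
            raise KeyError(f"Unknown task id {item_id}")
        item = self.items[item_id - 1]
        nxt = _NEXT.get(item.status)
        if nxt is None:
            raise ValueError(f"Task {item_id} is already completed")
        # Assumption: only one task may be 'in progress' at any time.
        if nxt is Status.IN_PROGRESS and any(
            other.status is Status.IN_PROGRESS for other in self.items
        ):
            raise ValueError("Finish the current task before starting another")
        item.status = nxt
        return item

    def render(self) -> str:
        """Plain-text view to feed back to the model on every turn."""
        return "\n".join(f"[{i.status.value}] {i.id}. {i.title}" for i in self.items)


if __name__ == "__main__":
    todo = TodoList.create(
        ["Create card board", "Add title and subtitle", "Add language dropdown"]
    )
    todo.advance(1)
    print(todo.render())
```

After `advance(1)`, `render()` lists item 1 as “in progress” and the other two as “not started”. Rejecting skipped or parallel transitions is one way to make the list harder to “complete” on paper without doing the work, though it cannot check that the work itself happened.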

I’m currently using Open WebUI to interact with these models. Open WebUI lets you import custom tools (Python scripts) to customize and adjust the workflow to your needs.

I’ve been experimenting with two custom tools, memory_enhancement and manage_todo_list, designed to help smaller models ‘take notes’ on declared variables, break complex tasks into smaller ones, and keep track of them.
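A minimal sketch of what a memory_enhancement-style tool could look like. It loosely follows Open WebUI’s convention of a `Tools` class whose methods the model can call; the method names, parameters and in-memory storage are assumptions for illustration, not the tools from the repository.

```python
import json


class Tools:
    """Hypothetical memory tool in the style of an Open WebUI tool class.

    State lives on the instance, so it only persists for as long as the
    hosting process keeps this object alive.
    """

    def __init__(self):
        # logical name -> record of a shape the model created in Penpot
        self.elements: dict[str, dict] = {}

    def remember_element(self, name: str, shape_id: str, kind: str) -> str:
        """
        Record a design element the model has just created.
        :param name: Logical name, e.g. "settings_card_title".
        :param shape_id: ID of the created shape, as reported by the design tool.
        :param kind: Element type, e.g. "board", "text", "rect".
        :return: Confirmation, or a warning if the name is already taken.
        """
        if name in self.elements:
            existing = self.elements[name]["shape_id"]
            return (
                f"'{name}' already exists (shape_id={existing}). "
                "Update that shape instead of creating a new one."
            )
        self.elements[name] = {"shape_id": shape_id, "kind": kind}
        return f"Stored '{name}' -> {shape_id}"

    def recall_elements(self) -> str:
        """
        List every element recorded so far.
        :return: JSON object mapping logical names to shape records.
        """
        return json.dumps(self.elements, indent=2, sort_keys=True)
```

The duplicate-name warning nudges the model toward updating an existing shape rather than creating another one, but nothing forces the model to call `recall_elements` before it starts drawing.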

The results did not go as expected, and I did not notice any improvement. Even though the tools were called, the model ignored what it had recorded with them earlier: for example, it would ignore the list of declared variables and create new elements instead of updating existing ones, or simply mark tasks as ‘in progress’ and then ‘completed’ without doing anything.

I’m curious if there are plans to give Penpot-MCP tools for workflow management that help the model plan and keep track of the design process, such as:

- Task Tracking: Breaking tasks into atomic, testable steps, adding them to a task list, and updating the list accordingly.

- Error Handling: Preventing the model from repeatedly retrying arbitrary code by analyzing the error type, querying relevant API documentation, and updating its reasoning context.

- Memory Management: Tracking state specifically within Penpot’s working environment, so the model does not lose track of it across multiple code blocks or design elements.
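The error-handling idea could be sketched as a guard that classifies each failure and refuses to let the same code be retried indefinitely. The regex patterns assume JavaScript-style error messages, and the categories, hint texts and retry limit are hypothetical placeholders; a real tool would return the relevant Penpot API documentation instead of fixed strings.

```python
import hashlib
import re
from collections import Counter

# Hypothetical error categories, matched against JavaScript-style messages.
# Order matters: the first matching pattern wins.
ERROR_PATTERNS = [
    ("syntax", re.compile(r"SyntaxError|Unexpected token")),
    ("reference", re.compile(r"ReferenceError|is not defined")),
    ("api-misuse", re.compile(r"TypeError|is not a function|Cannot read propert")),
]

# Placeholder hints; a real tool would look up the matching API docs instead.
DOC_HINTS = {
    "syntax": "re-read the code for typos before changing any API calls",
    "reference": "check which variables and globals exist in the execution context",
    "api-misuse": "look up the failing method or property in the API docs",
    "unknown": "read the full error and inspect the current design state",
}


class RetryGuard:
    """Stops the model from re-running the same failing code indefinitely."""

    def __init__(self, max_attempts: int = 2):
        self.max_attempts = max_attempts
        self.failures = Counter()  # fingerprint of (code, error category) -> count

    @staticmethod
    def classify(error: str) -> str:
        for category, pattern in ERROR_PATTERNS:
            if pattern.search(error):
                return category
        return "unknown"

    def check(self, code: str, error: str) -> str:
        """Record a failure and return guidance to put back into the model's context."""
        category = self.classify(error)
        # Leading/trailing whitespace changes do not count as a new attempt.
        fingerprint = hashlib.sha256(f"{code.strip()}\n{category}".encode()).hexdigest()
        self.failures[fingerprint] += 1
        count = self.failures[fingerprint]
        if count > self.max_attempts:
            return (
                f"STOP: this code failed {count} times with a {category} error. "
                f"Do not retry it unchanged; {DOC_HINTS[category]}."
            )
        return f"{category} error (attempt {count}): {DOC_HINTS[category]}."
```

Keying on the code plus the error category means a genuinely revised snippet starts with a fresh count, while an unchanged retry escalates to a “STOP” message after `max_attempts` failures.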

My Goal: Fully Local Design Workflow

I’m still testing local models because the ultimate goal is a self-hosted Penpot instance running with a local LLM. I’ve updated my prompts to include more detail and task-tracking tools to counter hallucination and lost context, though results are still hit-or-miss (often new cards get stacked on top of old ones instead of the existing elements being updated).

[!info] If anyone has resources or tips for working with Penpot-MCP and local LLMs, I’d love to hear them! I’ve also shared a GitHub repository containing the Svelte app, the prompts, the reference images and the custom Open WebUI tools I used.

Failed & Work-in-Progress Attempts

Below are the examples where the initial logic failed or where I am currently testing alternative layouts.

Failed Graph (Non-continuous lines):

Screenshots showing the progress and the final result (last image) for the task ‘Create an alternative layout for the home page’:

The final result!

It is an alternative layout for sure, but I would not recommend just prompting ‘Create an alternative’ and hoping for a good result. Also, while producing this result, Opus-4.5 deleted the entire design multiple times and started over.

I think it’s because of the way Penpot components work: “Main components cannot be detached from themselves … Make sure that you are trying to detach a component copy.”

So, whenever a component it had created did not fulfil the user’s request, Opus deleted the whole component instead of detaching it. In this case, the entire design was deleted five or six times before it was finalised.

Failed (but still the best) results of Qwen3:32b for creating a User Settings Card:

The updated prompt: penpot-mcp-experiments/qwen3-32b.md

Snippet of the updated prompt:

<system>

You are an AI assistant creating designs in Penpot using the penpot-mcp tools.

You MUST use task tracking and structured reasoning context to ensure reliable execution.

</system>

<instructions>

You are a highly sophisticated automated coding agent with expert-level knowledge across many different programming languages and frameworks and software engineering tasks - this encompasses debugging issues, implementing new features, restructuring code, and providing code explanations, among other engineering activities.

The user will ask a question, or ask you to perform a task, and it may require lots of research to answer correctly. There is a selection of tools that let you perform actions or retrieve helpful context to answer the user's question.

By default, implement changes rather than only suggesting them. If the user's intent is unclear, infer the most useful likely action and proceed with using tools to discover any missing details instead of guessing. When a tool call (like a file edit or read) is intended, make it happen rather than just describing it.

You can call tools repeatedly to take actions or gather as much context as needed until you have completed the task fully. Don't give up unless you are sure the request cannot be fulfilled with the tools you have. It's YOUR RESPONSIBILITY to make sure that you have done all you can to collect necessary context.

Continue working until the user's request is completely resolved before ending your turn and yielding back to the user. Only terminate your turn when you are certain the task is complete. Do not stop or hand back to the user when you encounter uncertainty — research or deduce the most reasonable approach and continue.

</instructions>

<task>

Create a card container rectangle with title and subtitle and form fields (language dropdown) in my current penpot file, its mandatory to use the penpot-mcp tools.

- Use 'Flex Layout'

- Convert the User Settings card into a component and logically group the layers

</task>

<workflowGuidance>

For complex projects that take multiple steps to complete, maintain careful tracking of what you're doing to ensure steady progress. Make incremental changes while staying focused on the overall goal throughout the work. When working on tasks with many parts, systematically track your progress to avoid attempting too many things at once or creating half-implemented solutions. Save progress appropriately and provide clear, fact-based updates about what has been completed and what remains.

When working on multi-step tasks, combine independent read-only operations in parallel batches when appropriate. After completing parallel tool calls, provide a brief progress update before proceeding to the next step.

For context gathering, parallelize discovery efficiently - launch varied queries together, read results, and deduplicate paths. Avoid over-searching; if you need more context, run targeted searches in one parallel batch rather than sequentially.

Get enough context quickly to act, then proceed with implementation. Balance thorough understanding with forward momentum.

</workflowGuidance>

Note: I used Gemini to proofread, correct and revise the initial text for this post, to make things easier for you and me.

Hey everyone,

I was happy to hear about the release of an official Penpot-MCP, and I tried to recreate the examples shown on Penpot’s official YouTube channel or in Penpot’s AI Paper. The latter referred to demo videos on Google Drive.

However, I could not recreate a single example as shown in these demos, so I am looking for guidance. It’s not that the results were “a bit off”; they did not resemble the desired output at all. So, I was wondering if there are more details on the Penpot MCP demos, e.g., prompt templates, the LLMs used and the files used (e.g., Penpot or source files).

Thanks in advance.

The demos I am referring to:

@juan.delacruz | MCP demos - Google Drive