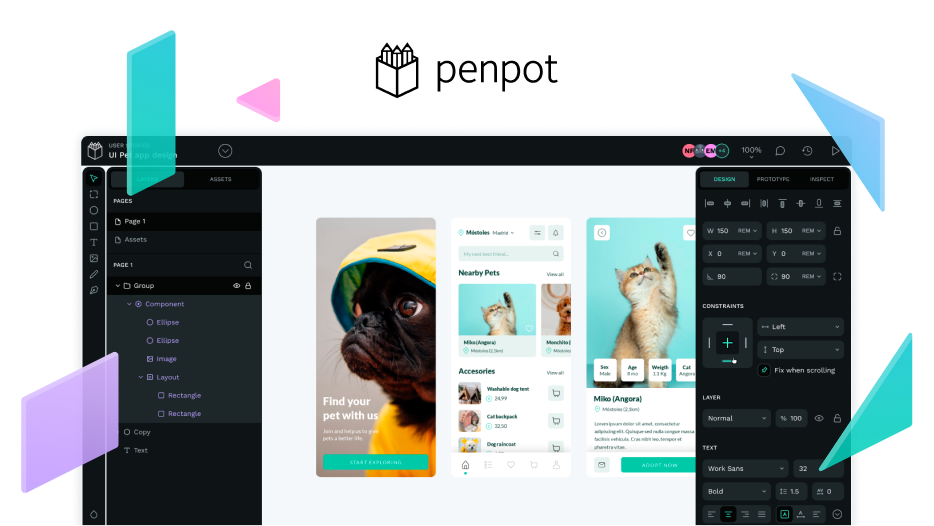

Penpot

@mcp-penpot create a basic and meaningful prototyping interaction between the screens currently selected in Penpot. Look for possible interaction elements, such as buttons with text or icons, or cards that can act as interaction triggers to navigate between boards.

@mcp-penpot take the HTML / CSS generated from <> and recreate it in my current Penpot file. Do not just draw boxes; use ‘Flex layout’ for the containers, convert the buttons and other action triggers into components, and group the layers logically.

Generate a Design System documentation file from a Penpot file:

As a Code Generation AI specialised in Design System documentation, your task is to fully extract the design system data from the provided Penpot file / API response and generate a single, comprehensive markdown file (~/AI/mcp-servers/design-system-documentation.md).

The output must be structured with the following mandatory sections, including all available detail:

- Introduction & Overview - A brief, professional introduction to the Design System (DS) and its purpose (e.g. consistency, scalability). Specify the source Penpot file, project name, and date of extraction.

Source Penpot File: [Insert Name of Penpot Project/File]

Date of Extraction: [YYYY-MM-DD]

- Design System Colors - A comprehensive colour palette. Document semantic colors with usage notes, and give each color's RGB, Hex, and HSL values.

- Design Tokens: Typography - A complete typography system featuring font-family, text styles (font-size, weight, line-height, and letter-spacing), and a typography scale based on the Minor Third ratio.

Include detailed usage guidelines.

- Design Tokens: Spacing & Layout - Full spacing and layout specifications:

6-level spacing scale (XS to 2XL) in px and rem.

12-column grid system with gutter and margins.

Component dimensions table.

5 responsive breakpoints.

- Design Tokens: Depth & Effects - Shadow and radius tokens:

Shadow elevation with full CSS box-shadow value.

7 border radius values from 4px to 80px.

Opacity values for various shapes.

- Component Inventory - Details of major components:

Button (3 variants: Primary, Secondary, Icon:Text).

Input field (with search variant).

Card (Main card, Related card with dimensions and shadows).

Modal/Dialog (Slide in).

Navigation item (4 navigation types).

Category Pill (with 4 different states).

Tabs (with 4 different states).

Banner Carousel (with fade transition and position indicator).

Additional components (e.g. Headers).

- Additional guidelines - Responsive behaviour, Accessibility, Performance, Usability and design principles.

- Implementation guidelines - CSS variable example and HTML component usage.

Critical Requirements

Use Markdown Tables extensively for structured data (Colors, Typography, Spacing, Breakpoints).

All extracted values must be presented with their numeric value and unit (e.g. 16px, 1rem, 0.75).

Ensure all sections are present and populated with the available data from the Penpot extraction process.

Use descriptive markdown headings (#, ##, ###) for clear hierarchy.

DO NOT GENERATE ANOTHER FILE TO DOCUMENT THE PROCESS, JUST GENERATE design-system-documentation.md file.

Context: The Design System foundations (Color and Typography tokens) have already been synchronised between Penpot and the codebase (CSS variables, utility classes, etc.). The LLM has access to the Penpot Model Context Protocol (MCP) and the design-system-documentation.md file. Do not generate extra documentation of the process. Once you finish, show me the result by opening the HTML in the internal browser in VS Code.
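Several values requested by the prompt above are plain arithmetic (Hex to RGB and HSL, a Minor Third type scale, px to rem), so the generated documentation can be spot-checked with a small script. The following is only a sketch and not part of the prompt: the function names are hypothetical, the 16px root font size is an assumption (the common browser default), and 1.2 is the usual numeric value of the Minor Third ratio.

```python
import colorsys

MINOR_THIRD = 1.2  # usual numeric value of the Minor Third ratio


def hex_to_rgb(hex_color: str) -> tuple[int, int, int]:
    """Convert '#RRGGBB' to an (r, g, b) tuple of 0-255 integers."""
    value = hex_color.lstrip("#")
    if len(value) != 6:
        raise ValueError(f"expected #RRGGBB, got {hex_color!r}")
    return tuple(int(value[i:i + 2], 16) for i in (0, 2, 4))


def rgb_to_hsl(r: int, g: int, b: int) -> tuple[int, int, int]:
    """Convert 0-255 RGB to (hue in degrees, saturation %, lightness %)."""
    # colorsys works on 0-1 floats and returns values in HLS order.
    h, l, s = colorsys.rgb_to_hls(r / 255, g / 255, b / 255)
    return round(h * 360), round(s * 100), round(l * 100)


def type_scale(base_px: float, steps: int, ratio: float = MINOR_THIRD) -> list[float]:
    """Return `steps` font sizes in px, each `ratio` times the previous one."""
    return [round(base_px * ratio ** n, 2) for n in range(steps)]


def px_to_rem(px: float, root_px: float = 16) -> float:
    """Convert px to rem; root_px=16 is an assumed root font size."""
    return px / root_px


if __name__ == "__main__":
    print(hex_to_rgb("#FF0000"))   # RGB triple for pure red
    print(rgb_to_hsl(255, 0, 0))   # HSL triple for pure red
    print(type_scale(16, 3))       # first three steps from a 16px base
    print(px_to_rem(24))           # 24px expressed in rem
```

Comparing these results against the tables in design-system-documentation.md is a quick way to catch values the model rounded or invented.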

——

Generate a complete, single-page solution for a standard User Login Screen. Add the files to my project.

The output must consist of two separate, non-documentary code blocks only:

HTML (semantic and structured).

CSS (modular and token-based)

The size of the login screen must be mobile.

Design & Consistency constraints:

Layout/Aesthetics: It must adhere to the overall aesthetic, visual hierarchy and layout style present in the Penpot file’s main screens.

Typography: Use the defined Text Style tokens.

Spacing: Apply spacing tokens for padding, margins and gaps within the form and container.

Components: Leverage properties for input fields, buttons & card containers (like border-radius and box-shadow tokens) as defined by the system.

Output constraint: DO NOT generate any introductory text, explanations, notes or markdown tables.
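The "token-based" CSS requested above usually means that every value is referenced through a CSS custom property. As a rough illustration of what that token layer could look like, the sketch below turns a token dictionary into a `:root` block. The function name, the token names and the spacing values are hypothetical placeholders, not values taken from the Penpot file.

```python
def to_css_variables(tokens: dict[str, dict[str, str]], selector: str = ":root") -> str:
    """Render nested tokens as CSS custom properties, e.g. --spacing-md: 16px;"""
    lines = [f"{selector} {{"]
    for group, values in tokens.items():
        for name, value in values.items():
            lines.append(f"  --{group}-{name}: {value};")
    lines.append("}")
    return "\n".join(lines)


# Hypothetical tokens for illustration only; real values come from the design system.
example_tokens = {
    "spacing": {"xs": "4px", "md": "16px"},
    "radius": {"sm": "4px"},
}

css = to_css_variables(example_tokens)
print(css)
```

A login form rule would then use `padding: var(--spacing-md);` rather than a hard-coded `16px`, which keeps the generated screen aligned with the documented tokens.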

============

1. Introduction & Overview

This Design System (DS) serves as the foundational, single source of truth for the [Project Name] user interface and experience. Its primary purpose is to ensure visual consistency, improve collaboration between design and development teams, and enable scalability across digital products.

By utilizing pre-defined components, typography, and color tokens, this system reduces redundant work and accelerates development cycles.

Source Penpot File: [Insert Name of Penpot Project/File]

Date of Extraction: [YYYY-MM-DD]