Generative AI through LLMs is now commonplace, and we have seen how quickly these models can produce statistically pleasing output, whether it's (some form of) text or images. The abundance of raw data has allowed unsupervised training to interpolate wonderfully across a massive solution space and hand you one (or four) useful blobs at a time.

But what about highly structured data? What about semantically rich code structures that convey not only logic and behaviour but also visual meaning?

Beyond relatively simple boilerplate scaffolding or short code snippets, the reality we’re facing is that there’s still a ton of work to do before anyone can crack this problem.

That is why today we're sharing our 5 open AI challenges with the broader open source design community. Fueled by our company-wide AI hackathon last April and the help of AI/ML company Neurons Lab, we have devised these challenges as a way to frame very concrete "what ifs" that make use of existing data.

Summary of all 5 challenges!

You'll find that every challenge starts with a clear purpose around an intimate relationship between design and code, then documents one or more approaches for various use cases. You'll get a clear idea of the relevant "state of the art" for each challenge/approach, as well as some tips to get started.

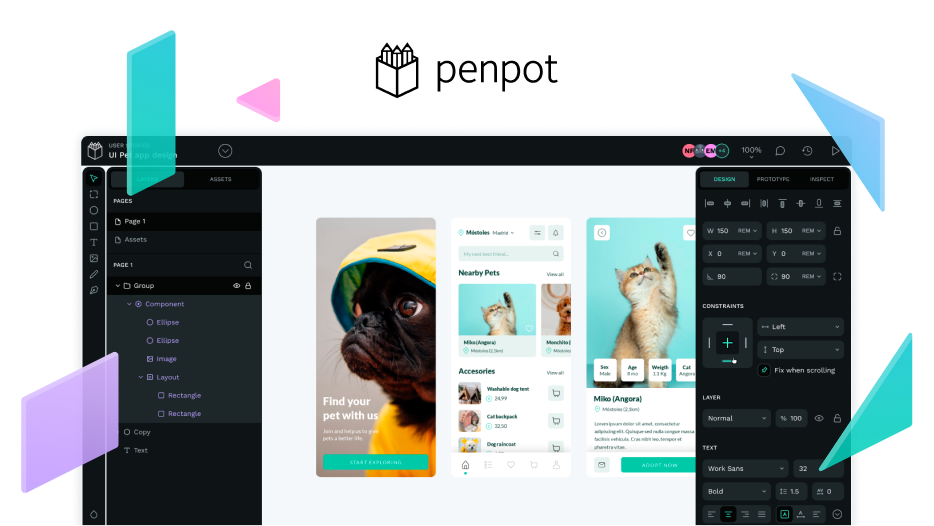

Slide from my Penpot Fest keynote showing QR codes for all 5 challenges

It's fun to share some hype every now and then and surprise everyone with a cool, unexpected breakthrough. And yet it's equally important to demystify this whole "AI for design" space and make it as accessible as possible for everyone. We're counting on you to bring the best of the open source ethos to AI design & prototyping! Now, go fork these repos and see how far you can get!

- Design Co-pilot

- UX to Documentation Generator

- Design System Advisor

- Content Generator

- Generative-based Co-pilot

You can go straight to the Keynote section where I go through all this with some nice mockups!

Hey! Apologies if this is off-topic.

Many of the possibilities in these challenges sound very exciting to me. Component generation makes me think of auto-generating things like hover and focus states for components, and UX to documentation would truly be amazing…

But I have worries, too. Has the Penpot team taken any position on the ethical issues surrounding AI, specifically the sourcing of training data?

Especially looking at challenge #4: text generation from LLMs such as ChatGPT and text-to-image tools such as Midjourney are powered by unethically scraped data…

Whenever I see design apps add integrated “text-to-image” functionality, I think of my artist and illustrator friends who are losing jobs to machines that make a pastiche of their work without their permission.

I am not fundamentally anti-AI and I understand there is much potential in it as a tool, but I would like to see discussion/recognition of these issues.

If this has already been discussed by the Penpot team and I simply missed it, I apologize.

Hey @meroron ! I don’t see why your comment could be considered off-topic. I think you’re absolutely right in raising those concerns and we share them at Penpot. We have regular discussions about them.

This is why we're sharing everything we're coming up with, from ideas to actual code you can test, instead of building "magical stuff" behind the scenes. We're committed to open source and ethical AI, and that includes the way data is collected.

That's also why we were so excited to have Iván Martínez, creator of PrivateGPT, give a workshop at the recent Penpot Fest on how to use open source AI and your own data to build a fully owned, ethical solution.

It was 1997 when I first learned about Free & Open Source Software, and its core relationship with rights/freedoms and ownership through licensing has always amazed me. Shortly after, I saw how open source cryptography was challenged as not being "secure" enough, and some people were trying to find a "middle ground". Today that's not even a question: we can have full transparency and security at the same time. I think there won't be a "middle ground" for open source AI either.

Great question @meroron ! I wonder if ethics are accounted for in the “Risks” rating (I’ll have to rewatch the talk).

Is there a step-by-step writeup of this workshop anywhere? I'd love to give it a try too.

Any updates on this? From what I've read, I imagine that Penpot 2.0 will not include any AI features. Have you had any further discussions?

Yes! We have been very busy with this and we have changed our approach. While 2.0 won't bring any specific AI features, we're working hard on a fresh perspective that will be shared very soon (expect May).

I think https://uizard.io has some of the best UI/UX AI experiences. If Penpot could do similar things, I would definitely switch to Penpot.